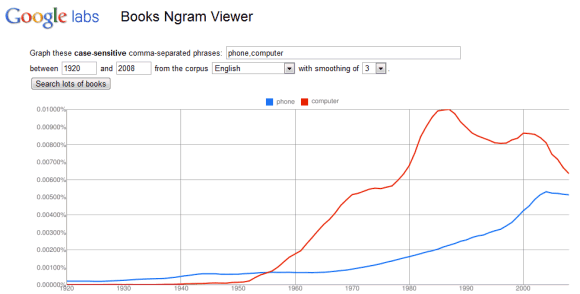

"The datasets we're making available today to further humanities research are based on a subset of that corpus, weighing in at 500 billion words from 5.2 million books in Chinese, English, French, German, Russian, and Spanish. The datasets contain phrases of up to five words with counts of how often they occurred in each year. (...) The Ngram Viewer lets you graph and compare phrases from these datasets over time, showing how their usage has waxed and waned over the years," says Jon Orwant, from the Google Books team.

The nice thing is that the raw data is licensed as Creative Commons Attribution and can be downloaded for free. Maybe Google should use the same license for the Ngram database obtained from indexing the web.
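To make the "phrases of up to five words with counts of how often they occurred in each year" idea concrete, here is a minimal sketch in Python. It is only an illustration and not Google's actual pipeline or file format: the function name `count_ngrams_by_year`, the `(year, text)` input shape, and the whitespace tokenization are all assumptions made for the example.

```python
from collections import Counter


def count_ngrams_by_year(books, max_n=5):
    """Count every phrase of 1..max_n words, keyed by (phrase, year).

    `books` is a hypothetical iterable of (year, text) pairs; a real
    corpus would need proper tokenization and normalization.
    """
    counts = Counter()
    for year, text in books:
        words = text.split()  # naive whitespace tokenization (assumption)
        for n in range(1, max_n + 1):
            # Slide a window of n words across the text.
            for i in range(len(words) - n + 1):
                phrase = " ".join(words[i:i + n])
                counts[(phrase, year)] += 1
    return counts


# Toy data, invented for this example only.
books = [
    (1900, "the radio and the film"),
    (1950, "the television and the radio"),
]
counts = count_ngrams_by_year(books)
```

Looking up `counts[("the radio", 1950)]` then gives the number of times that two-word phrase occurred in the toy 1950 text, which is the kind of per-year figure the Ngram Viewer plots.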

This is the best thing ever!

I just discovered an easter egg in it.

Try and search for: never,gonna,give,you,up

and guess which result is going to appear?!?

here's THE answer: http://goo.gl/K75JI http://ngrams.googlelabs.com/graph?content=never,gonna,+give,you,up&year_start=1500&year_end=2008&corpus=5&smoothing=0

:D

cheers

Federico Vitiello

Hi

You are doing a great service to humanity by helping to spread and share knowledge, which is in the spirit of Google.

http://ngrams.googlelabs.com/graph?content=television,radio,film,web&year_start=1900&year_end=2008&corpus=6&smoothing=1

Will smaller languages like Swedish and Finnish also become available?

Thanks for this great new toy!

Google's "Books Ngram Viewer" is another free online tool that allows us to visualize information in new ways. In my blog post, "How To Quantify Culture? Explore 500 Billion Published Words With Google's Ngram Viewer", I explore how to use the tool in the classroom to help students better understand the research method: http://bit.ly/gcKJdp

PS - It includes a Rickrolling Easter Egg - Search for "never gonna give you up" and see what pops up!

Sharing Is Caring

Just tried it out for whether to use 'spelled' or 'spelt', 'burned' or 'burnt' and 'learned' or 'learnt'. Results here: http://bit.ly/hkk2h9

Really useful tool. Great for procrastinating purposes too!

Johanna

Combine the Ngram Viewer with Wordle.net (which creates word clouds out of text, allowing writers, specifically fiction writers, to see words they're using too much). With the two together, you can figure out which words in your manuscript need to be cut, and which are common enough in the rest of historical literature to ignore.

The writeup is here: http://philipisles.blogspot.com/2011/01/combining-ngrams-and-worldle.html

When is Ngram Viewer going to be updated? It's stuck at 2008.

And I have a feeling all of the old books aren't being updated either.

I hope it doesn't disappear like Google Sets did...

The one unfortunate thing I've noticed about the Ngram Viewer is that many early works appear to be, for lack of a better term, mistranslated. I found some very curious, unexpected spikes for certain words, and when I went to investigate, I found that many letters are "read" wrong. The worst case is probably that of the long S, which looks to their OCR software (and to the naked eye) like an F.

If you think of a common four-letter word beginning with F, one you might not expect to see much in 17th- and 18th-century literature, and compare its frequency with an even more common four-letter word beginning with S but otherwise sharing the same three letters, you will first notice unexpected spikes for the F word. The two frequencies then converge and diverge in the opposite direction right at the beginning of the 19th century, when use of the long S began to go out of fashion.

Less commonly (and less amusingly), A is sometimes read as I.